Who is Codingscape?

Codingscape is a modern consultancy that solves global technology problems while putting people first. They build custom software and solutions for industry leaders like Zappos, Twilio, and Veho. Instead of offshoring their software development or hiring internally, companies partner with Codingscape to deploy senior software teams in 4-6 weeks.

What brought them to Vellum?

Demand for custom AI solutions skyrocketed in 2023, and Codingscape was looking for the right AI tech stack to build and deploy AI apps.

Before Codingscape discovered Vellum, they used open-source LLM frameworks to build these apps for their clients. While these frameworks provided an initial entry point into AI app development, the process lacked scalability and efficiency. Their engineers took a long time to ramp up on new AI processes, such as evaluating prompts, setting up vector databases, or handling complex RAG structures.

They needed tooling to expedite this process and enable iterative testing of different prompts and models before they’re pushed into production.

We sat down with Chris Shepherd, Codingscape’s AI Product Manager, to learn more. Here’s Codingscape’s journey from lacking the time and resources to build AI apps to winning more deals and scaling their AI efforts.

How does Codingscape use Vellum today?

Codingscape initially used Vellum to build a simple AI-powered quality assurance app. Once they learned the basics, they started using Vellum to rapidly create additional AI apps.

Vellum’s Workflow feature made it easier for their engineers to build and reuse advanced LLM chains, and the Prompt Sandbox helped with prompt and model evaluation.

Chris recalls the effort it took him to build a prototype with other frameworks, and how much faster the process is for other engineers now.

As a result, they’ve been a happy customer for over a year and have built many apps:

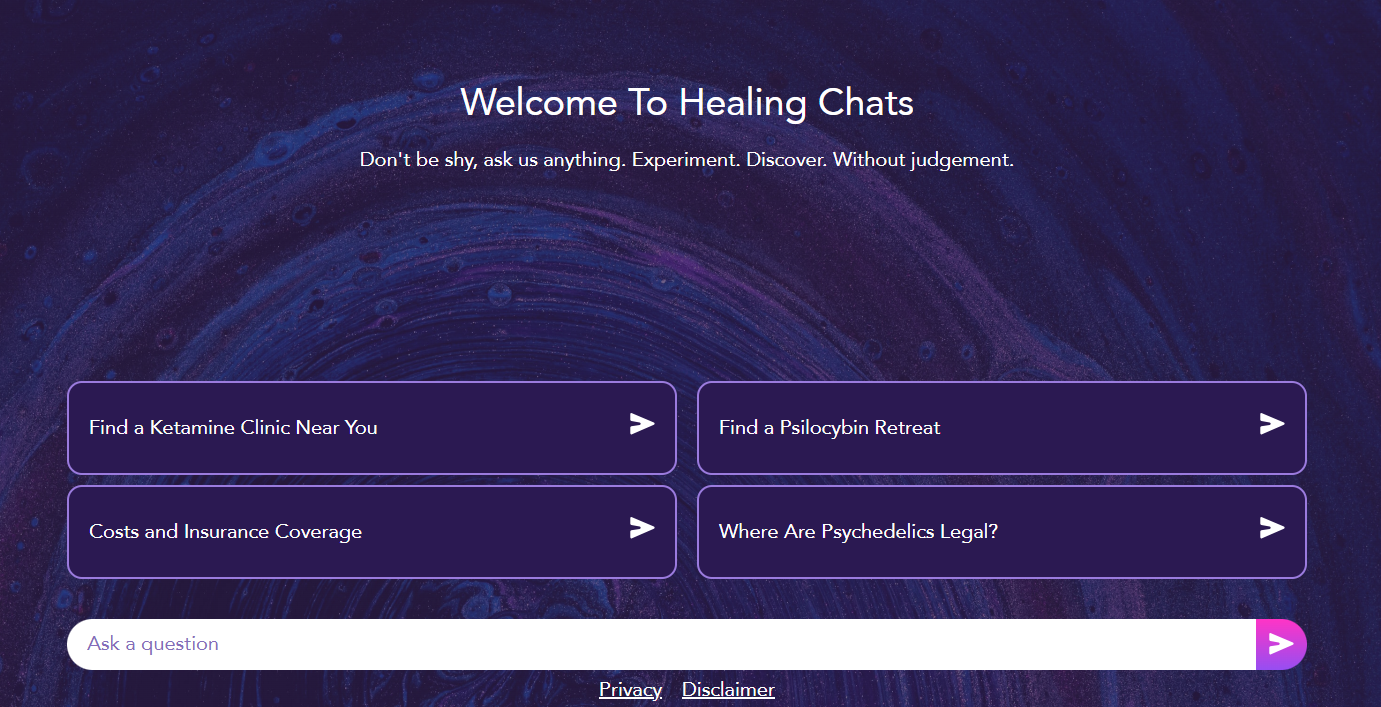

- Healthcare resources chatbot for women that references PubMed articles to respond to user inquiries on women’s health.
- Medical assistance chatbot that helps you find ketamine clinics near you and psychedelic therapies around the globe.
- TeleTexter, a Google Chrome extension that summarizes all your open browser tabs into usable text with links for email newsletters.

They quickly shipped some meaningful internal tools such as:

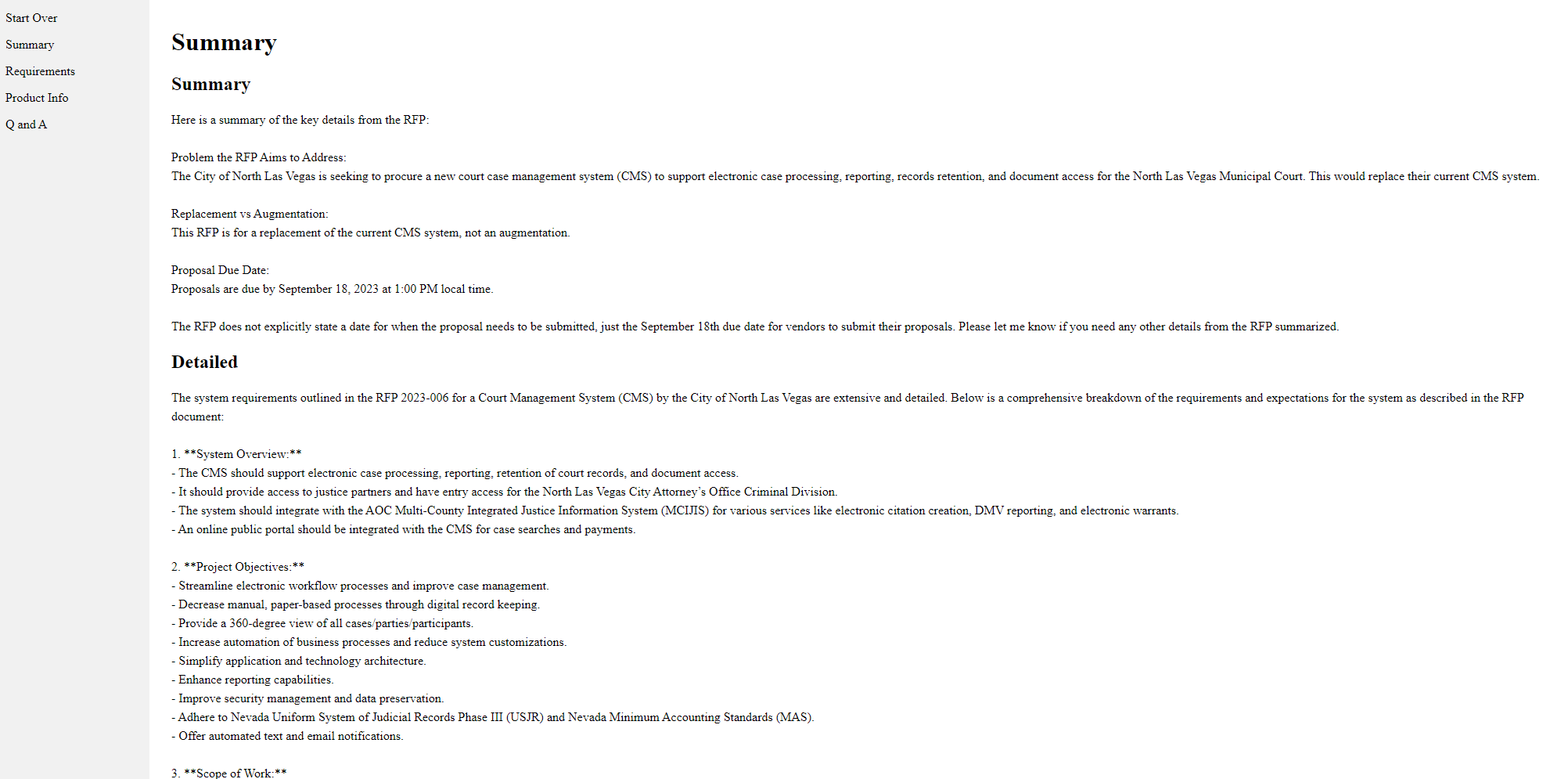

- Resume parser chatbot that stores employee resumes, allowing quick chatbot-assisted matching of developers to new projects. This enabled their PM teams to match the right developer with a new project much faster.
- Gov RFP tool that analyzes government request-for-proposal (RFP) documents, generates summaries of the work required in the project, and generates proposals for submission based on RFP requirements.

What impact has this partnership had on Codingscape?

Vellum makes it faster for Codingscape to onboard senior software engineers to AI development, get them to experiment with new features, and deploy AI apps in production.

Now Codingscape can deliver new AI apps faster and secure more engagements with technology partners that need AI software development resources.

“Vellum makes it easier to deliver reliable AI apps to our partners and train senior software engineers on emerging AI capabilities. Both are crucial to our business and we’re happy to have a tool that checks both boxes.” - Chris Shepherd

Chris also mentioned some secondary benefits of this collaboration:

- Quick access to new AI capabilities in Vellum: New LLMs and AI capabilities are released all the time. Vellum gives Chris and the Codingscape team access to new AI capabilities so they can update production AI apps with the latest technology without having to rebuild apps from the ground up.
- Unified AI tooling speeds up product delivery: With everything they need to build production AI apps in one place, Codingscape can deliver reliable AI apps faster. Vellum’s centralized AI toolset increases Codingscape’s capacity to deliver valuable AI development services and win more engagements.
- Easy-to-build repeatable AI processes: Codingscape developers don’t have to worry about which vector database to use or how to build LLM chains manually for every app. They use Vellum Workflows so that once they’ve built a chatbot it’s easy to reference and extend the logic to new products for other partners.
- First class customer support: Codingscape’s engineers have immediate access to customer support from Vellum in a shared Slack channel that helped them build new apps faster. Troubleshooting happens quickly and Vellum’s CTO and CEO sometimes help answer engineers' questions directly.

We enjoy collaborating with Codingscape and are always striving to improve our product to better suit their needs.

Want to try out Vellum?

Vellum has enabled more than 100 companies to prototype faster, evaluate their prompts and ship production-grade AI apps.

If you're looking to incorporate LLM capabilities into your app and want to empower your software engineers, we're here to help you.

Request a demo for our app here or reach out to us at support@vellum.ai if you have any questions.

We’re excited to see what you and your team build with Vellum next!