Quick overview

LangChain is a popular framework made for developers to build AI applications. This guide helps you compare the best LangChain alternatives for building, deploying, and managing AI agents and workflows.

Top LangChain alternatives shortlist

- Best overall alternative: Vellum AI for enterprise-grade collaboration, observability, governance, and deployment flexibility.
- Top cloud-native choices: Vertex AI Agent Builder, Azure Copilot Studio, AWS Bedrock AgentCore.
- Best open-source: Haystack, LlamaIndex, Flowise, Superagent, CrewAI.
- For workflow automation: n8n and Zapier with AI extensions.

What is an AI agent framework?

An AI agent framework is software that helps teams, especially developers, build, orchestrate, and deploy autonomous or semi-autonomous agents. It provides workflow automation, memory, tool integrations, and runtime controls to run reliable multi-step processes.

Why use AI agent frameworks?

AI agent frameworks quickly turn scattered prototypes into production systems. Here are the benefits you can expect from using an AI agent framework:

- Accelerate time-to-market
- Ship reliable, observable production workflows
- Enable multi-agent collaboration and orchestration
- Gain enterprise governance, versioning, and auditability

Who needs AI agent frameworks?

Any developer team moving from an AI idea to AI agents with deep business impact stands to benefit. Ideally, your AI agent framework supports more teams in your org rather than catering only to developers. Teams like FP&A, Product, and Data Science should be able to collaborate with developers to build AI agents.

What makes an ideal AI agent framework?

The best frameworks are modular and observable, with governance that holds up in an audit and deployment options that fit your stack.

An AI agent platform often offers a richer agent-building experience with SDKs and a visual builder, so both technical and non-technical teams can ship quickly without creating more overhead for engineers.

Here’s what to look for in an ideal AI agent framework/platform:

- Cross-team collaboration: Shared workspaces and role-based access that enable teams to co-build, review, and deploy agents without silos.
- Developer necessities: Unified SDKs, custom code features, and strong documentation
- Observability: Logs, traces, and evaluation tools
- Governance: RBAC, audit logs, and compliance features
- Flexible deployment: Cloud, VPC, or on-prem
- Integrations: Connectors for tools and APIs

Key trends shaping 2026

- Multi-agent orchestration: Enterprises are scaling from single-agent pilots to dozens of coordinated agent systems, with initiatives like Salesforce and Google’s Agent-to-Agent (A2A) standard showing the push toward collaboration at scale [1].
- Enterprise governance: Regulatory pressure is forcing enterprises to emphasize RBAC, audit trails, and compliance logging as core features of AI platforms [2].
- Visual/low-code: Low and no-code platforms remain a top enterprise investment category for 2025, helping accelerate AI prototyping and delivery across teams [3].
- Open-source dominance: OSS underpins most production workloads, with surveys showing 90%+ of enterprises depend on open-source software in production [4].
- Vendor-managed runtimes: Vendor-managed AI platforms are gaining traction in regulated industries where compliance burden is highest, even if adoption multiples vary by sector [5].

Why use LangChain alternatives?

Choosing the right LangChain alternative is about finding a platform that better fits your team’s compliance, deployment, and integration needs. Here are the top reasons to choose an alternative:

- Faster building for developers, and un-gating agent building for non-technical teams
- Built-in observability and evaluation for safe rollouts
- Broader ecosystem integration (M365, AWS, GCP)
- Enhanced security and governance protocols (RBAC, audit logs)
- Flexible deployment (SaaS, VPC, on-prem)

Who Needs LangChain Alternatives?

- Teams focused on collaborative AI building across technical and non-technical roles
- Organizations aiming to become AI-native
- Enterprises with strict data residency and compliance
- Teams deploying agents across multiple clouds or regions
- IT leaders requiring robust monitoring and versioning
- Developers seeking model/tool neutrality
- Regulated industries (finance, healthcare) needing auditability

How to Evaluate LangChain Alternatives

Use these criteria to select the right LangChain alternative for your organization:

| Criterion | Description | Why It Matters |
| --- | --- | --- |
| Cross-Team Collaboration | Shared workspaces, role-based access, review/approval flows, and visual builders for non-devs | Aligns product, data, and business to co-build and ship agents faster with fewer handoffs |
| Modularity | Swappable, composable components for models, tools, memory, and routing | Enables customization and scaling without rewrites |
| Observability | Tracing, logs, metrics, eval harnesses, and regression alerts | Shortens MTTR; builds trust in outputs |
| Governance | RBAC, audit logs, change history, approvals, HITL | Mandatory for enterprise and regulated use |
| Deployment Options | Cloud, VPC, or on-prem; secrets and data residency controls | Fits diverse IT and compliance requirements |
| Integration | Connectors/SDKs for internal tools, RAG, and external APIs | Reduces glue code and maintenance |
| Developer Experience | Unified SDKs, clear docs, visual builder, CI hooks | Speeds onboarding and iteration |
| Performance | Latency, throughput, horizontal scaling patterns | Impacts UX and cost |
| Cost | Pricing model and total cost of ownership (infra + people) | Determines long-term feasibility |
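One way to apply these criteria is a simple weighted scoring matrix. The sketch below is illustrative only: the weights, the 1 to 5 scoring scale, and the names `WEIGHTS`, `weighted_score`, and `rank` are assumptions made for this example, not part of any vendor's tooling, and the candidate scores are placeholders rather than real assessments.

```python
# Hypothetical weights per criterion (they sum to 1.0); tune to your priorities.
WEIGHTS = {
    "collaboration": 0.15,
    "modularity": 0.10,
    "observability": 0.15,
    "governance": 0.15,
    "deployment": 0.10,
    "integration": 0.10,
    "developer_experience": 0.10,
    "performance": 0.10,
    "cost": 0.05,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-criterion scores (assumed 1-5 scale) into one number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


def rank(candidates: dict) -> list:
    """Return (name, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Placeholder scores for two made-up platforms.
    candidates = {
        "platform_a": {name: 4 for name in WEIGHTS},
        "platform_b": {**{name: 3 for name in WEIGHTS}, "governance": 5},
    }
    for name, score in rank(candidates):
        print(f"{name}: {score:.2f}")
```

Scoring each shortlisted tool with the same weights keeps the comparison explicit, and changing a weight shows how sensitive the ranking is to a single criterion.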

How We Chose the Best LangChain Alternatives

We evaluated platforms on:

- Ease and depth of building
- Collaboration enablement
- Enterprise deployment and security features
- Observability and evaluation capabilities
- Breadth of ecosystem integrations
- Scalability and operational maturity
- Balance of open-source flexibility and managed support

Expected trade-offs:

- Managed vs self-hosted: ease vs control
- Open-source vs proprietary: flexibility vs SLAs
- Depth of integration vs neutrality: ecosystem fit vs portability
- Feature richness vs simplicity: capability vs complexity

Top 15 LangChain Alternatives in 2026

1. Vellum AI — The Best LangChain Alternative in 2026

Quick overview: Vellum AI is the easiest way for businesses and AI builders to create production-ready AI agents without the engineering overhead LangChain often requires. Its prompt-to-agent builder generates full agent scaffolds from natural language, the visual editor makes iteration fast for non-technical teammates, and the Python SDK gives developers the depth they need. With built-in evaluations, versioning, and full observability, Vellum helps teams ship reliable agents quickly. Once agents are created, they can be packaged into AI Apps so anyone across the business can run and reuse automations safely.

Best For: Businesses and AI builders who want a collaborative, reliable, and easier-to-manage alternative to LangChain for building and scaling AI agents.

Pros:

- Agent Builder for fast, prompt-based agent creation
- AI Apps that let anyone in the business run and reuse automations safely
- Built-in evaluations, regression tests, and versioning
- End-to-end observability for fast debugging and monitoring
- Visual builder plus TypeScript/Python SDK for deeper customization
- Governance features like RBAC, audit logs, and environment control
- Flexible deployment options: SaaS, private VPC, or on-prem
- Safe, fast iteration and controlled promotion of changes

Cons:

- Some advanced SDK features still require engineering support
- New features may require light relearning as the platform evolves

Pricing:

Free tier available; paid plans start at $25 per month; enterprise plans available.

2. Vertex AI Agent Builder (Google Cloud) — Cloud-Native Agent Platform

Quick overview: Vertex AI Agent Builder is part of Google Cloud’s AI stack, offering scalable deployment with native GCP integrations. Strong fit for enterprises standardizing on Google infrastructure.

Best for: Organizations using Google Cloud for AI agent deployment

Pros:

- Deep integration with Google Cloud services
- Managed infrastructure and scalability
- Access to Vertex AI models and tools

Cons:

- Limited deployment flexibility (cloud-only)
- Less control over observability compared to Vellum

Pricing: Usage-based (compute, storage, API).

3. Microsoft Azure Copilot Studio — Agentic AI in the Microsoft Ecosystem

Quick overview: Microsoft Azure Copilot Studio is deeply tied into Microsoft 365 and Azure services, with enterprise security and compliance. Designed for organizations already embedded in the Microsoft ecosystem.

Best for: Enterprises leveraging Microsoft 365 and Azure

Pros:

- Seamless integration with Microsoft 365 and Teams
- Enterprise security and compliance
- Visual builder for agent workflows

Cons:

- Locked into Azure ecosystem
- Limited model/tool neutrality

Pricing: Enterprise licensing.

4. AWS Bedrock AgentCore — Scalable Agent Orchestration on AWS

Quick overview: AWS Bedrock AgentCore provides native agent orchestration on AWS with managed runtimes and access to multiple foundation models. It’s ideal for enterprises already standardized on AWS, though limited to cloud-only deployment with fewer built-in evaluation tools.

Best for: Teams building AI agents on AWS infrastructure

Pros:

- Native AWS service integration
- Managed runtime and scaling
- Access to multiple foundation models

Cons:

- AWS-only deployment
- Fewer built-in evaluation tools than Vellum

Pricing: Usage-based; varies by model and compute

5. n8n — Open-Source Workflow Automation with Agent Extensions

Quick overview: n8n is an open-source automation platform that combines AI agents with traditional SaaS workflows. With a low-code visual builder and hundreds of integrations, it’s a versatile option for both developers and operations teams. It can run self-hosted or in the cloud, though advanced AI features often require scripting.

Best for: Developers wanting open-source workflow automation with AI

Pros:

- Open-source and self-hostable
- Large library of integrations
- Flexible workflow builder

Cons:

- Lacks enterprise-grade observability
- Manual scaling and governance setup

Pricing: Free (OSS); Cloud from $20/month; Enterprise pricing available

6. Zapier — No-Code Automation with AI Capabilities

Quick overview: Zapier is a no-code automation leader that connects thousands of apps, now with AI integrations. It’s designed for business users to quickly set up workflows without technical expertise. While great for simple automations, it lacks deep agent orchestration capabilities.

Best for: Business users automating workflows with minimal coding

Pros:

- Huge app ecosystem
- Easy-to-use, no-code interface
- Quick setup for simple automations

Cons:

- Limited agent orchestration depth
- Lacks advanced evaluation and governance

Pricing: Free tier; paid plans from $19.99/month; Enterprise pricing available

7. Lindy AI — Personal AI Assistant Platform

Quick overview: Lindy AI is a lightweight platform to spin up conversational or workflow agents fast. Comes with templates and SaaS integrations, making it accessible for non-technical teams.

Best for: Individuals and teams building personal AI assistants

Pros:

- Prebuilt agent templates
- Integrates with calendar, email, and more
- Simple onboarding

Cons:

- Limited enterprise controls
- Fewer deployment options

Pricing: Starts at $25/month; Enterprise pricing available

8. Gumloop — Visual Agent Builder for Prototyping and Deployment

Quick overview: Gumloop is focused on rapid prototyping and sharing custom AI agents. Ideal for experimentation, proof-of-concepts, and testing agent ideas quickly.

Best for: Rapid prototyping and deploying custom AI agents

Pros:

- Visual builder for fast prototyping
- RAG support out of the box
- Collaboration features

Cons:

- Limited enterprise deployment options
- Fewer governance features

Pricing: Free tier; paid plans from $37/month; Enterprise pricing available

9. Stack AI — SDK for Custom AI Agent Development

Quick overview: Stack AI provides a visual interface to connect databases, APIs, and workflows. Tailored for building internal AI-powered tools that support business operations.

Best for: Developers needing a flexible SDK for custom agent logic

Pros:

- Visual workflow editor
- Connects to databases and APIs
- Simple deployment options

Cons:

- Limited observability and evaluation features
- Fewer governance controls

Pricing: Free tier; Enterprise plan

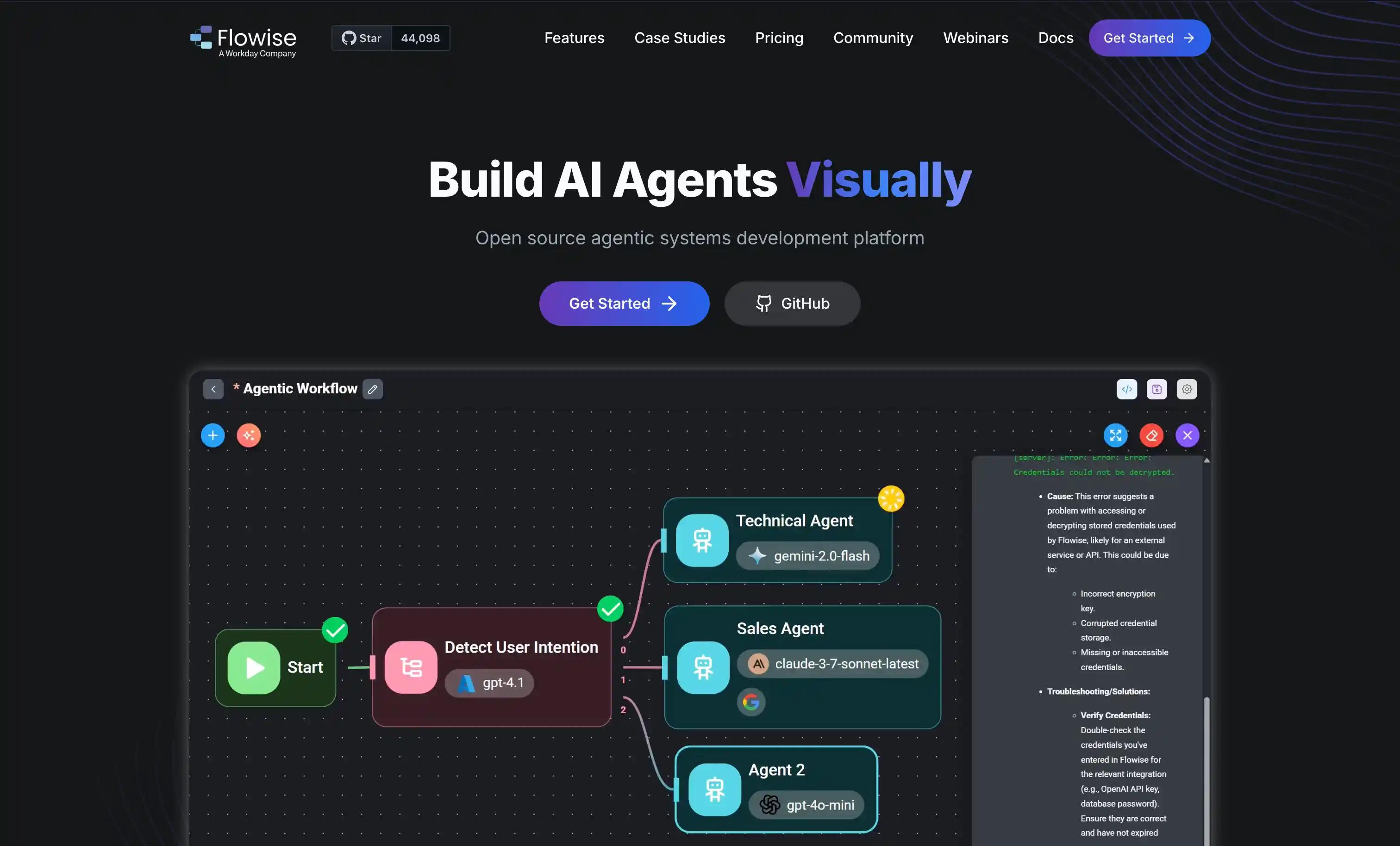

10. Flowise AI — OSS Visual LLM Orchestration

Quick Overview: Flowise AI is an open-source, drag-and-drop LLM orchestration tool best for rapid prototyping and OSS control.

Best for: Teams wanting open-source, visual LLM orchestration

Pros:

- Open-source, self-hostable
- Visual workflow builder
- Active community

Cons:

- Fewer enterprise controls
- Manual scaling and monitoring

Pricing: Free (OSS); paid plans from $35/month; Enterprise pricing available

11. Superagent — OSS Agent Framework for Developers

Quick overview: Superagent is a developer-first, open-source agent framework with plugin support. Ideal for engineering-heavy teams wanting maximum customization.

Best for: Developers building custom agent solutions

Pros:

- Modular agent framework
- Community plugins
- Flexible deployment

Cons:

- Lacks built-in governance
- Limited observability

Pricing: Free (OSS)

12. CrewAI — Visual Builder for Multi-Agent Orchestration

Quick overview: CrewAI specializes in designing teams of role-based agents through a visual workflow interface. It helps teams prototype and deploy collaborative agent flows quickly, without heavy coding. While easy to use, advanced observability and governance features are limited.

Best for: Designing collaborative agent teams with roles

Pros:

- Visual workflow builder
- Role-based agent collaboration
- Quick prototyping

Cons:

- Limited advanced observability
- Freemium model restricts some features

Pricing: Enterprise only.

13. Dust — AI Workflow Builder for Enterprises

Quick overview: Dust is an enterprise AI platform for building custom, contextual agents that connect to your company’s data and tools in a shared workspace, with a model-agnostic approach and security/compliance features.

Best for: Security-conscious enterprises that want to roll out data-connected agents without heavy engineering

Pros:

- Visual workflow builder
- Integrations with enterprise data sources
- Managed hosting

Cons:

- Limited open-source options
- Fewer observability features

Pricing: $29/month; Enterprise pricing available

14. Relevance AI — Multi-Agent Orchestration with Analytics

Quick overview: Relevance AI helps teams build and manage multi-agent workflows with built-in RAG, analytics, and dashboards for visibility.

Best for: Teams that want low-code agent workflows powered by data and real-time analytics.

Pros:

- Built-in analytics and tracing
- RAG and agent orchestration
- Cloud deployment

Cons:

- Limited deployment flexibility
- Fewer governance controls

Pricing: Free tier; paid plans starting at $19/month; Enterprise pricing available

15. OpenPipe — OSS Agent Orchestration for LLMs

Quick overview: OpenPipe is an open-source platform for fine-tuning and optimizing LLM prompts and agents, with tools for regression testing, evaluation, and versioning. It’s best for developers who want full control over agent orchestration and improvement in a self-hosted setup.

Best for: Developers seeking open-source agent orchestration

Pros:

- Open-source
- Flexible agent building
- Community support

Cons:

- No managed hosting
- Lacks enterprise-grade features

Pricing: Free (OSS); Enterprise pricing available

LangChain Alternatives Comparison Table

| Tool Name | Starting Price | Key Features | Best Use Case | Rating |
| --- | --- | --- | --- | --- |
| Vellum AI | Free tier; Enterprise | Prompt-based agent building; AI Apps; built-in evals & versioning; full observability; visual builder + SDK; governance controls (RBAC, audit logs); flexible deploy (SaaS/VPC/on-prem) | Businesses & AI builders needing a reliable, collaborative alternative to LangChain for production-ready agents | ★★★★★ |
| Vertex AI Agent Builder | Usage-based | GCP integration, scalable runtime | Cloud-native agent deployment (GCP) | ★★★★☆ |
| Azure Copilot Studio | Enterprise licensing | M365/Teams integration, visual builder | Microsoft ecosystem agent workflows | ★★★★☆ |
| AWS Bedrock AgentCore | Usage-based | AWS integration, managed scaling | AWS-native agent orchestration | ★★★★☆ |
| n8n | Free (OSS); Cloud from $20/mo | OSS, integrations, workflow builder | Open-source workflow automation | ★★★★☆ |
| Zapier | Free; from $19.99/mo | No-code, app integrations | Business workflow automation | ★★★★ |
| Lindy AI | From $25/mo | Assistant templates, SaaS integrations | Personal AI assistants | ★★★★ |
| Gumloop | Free; from $37/mo | Visual builder, RAG support | RAG prototyping | ★★★★ |
| Stack AI | Free; Enterprise | SDK, model-agnostic, DB/API connectors | Custom agent SDK development | ★★★★ |
| Flowise AI | Free (OSS); from $35/mo | Visual LLM orchestration (OSS) | Visual open-source LLM workflows | ★★★★ |
| Superagent | Free (OSS) | Modular agent framework, plugins | OSS agentic LLM apps | ★★★★ |
| CrewAI | Enterprise only | Visual builder, multi-agent orchestration | Designing role-based agent teams | ★★★★ |
| Dust | From $29/mo; Enterprise | Visual builder, enterprise integrations | Enterprise AI workflows | ★★★★ |
| Relevance AI | Free; from $19/mo; Enterprise | Analytics, RAG, agent orchestration | Analytics-driven RAG/agent workflows | ★★★★ |
| OpenPipe | Free (OSS); Enterprise | OSS agent orchestration, flexibility | OSS agent orchestration | ★★★★ |

Why Choose Vellum

Vellum removes the friction of learning LangChain that inevitably slows teams down. Developers get the same fine-grained control via Vellum’s SDKs, while the Agent Builder in our visual workflow sandbox lets product, data, and ops teams co-build agents in minutes without extra engineering overhead.

On top of speed, Vellum bakes in the enterprise must-haves LangChain leaves to manual setup: built-in evaluations and versioning, end-to-end observability (traces, logs, cost/latency), and governance with RBAC, audit logs, approvals, and HITL.

What makes Vellum different

- Ultra-fast building: Launch agents in minutes with natural language using Vellum's agent builder. No dragging and dropping nodes or code required.
- Built-in evaluations & versioning: Define small test sets, compare variants side-by-side, promote only what passes, and roll back safely.
- End-to-end observability: Trace every run at node and workflow levels, track cost/latency, and catch regressions before they hit users.
- Collaboration environment: Shared canvas with comments, role-based reviews/approvals, change history, and human-in-the-loop steps so PMs, SMEs, and engineers can build together.
- Developer depth when needed: TypeScript/Python SDKs, custom nodes, exportable code, and CI hooks to fit into existing pipelines.
- Governance-ready: RBAC, environments, audit logs, and secrets management to meet enterprise compliance.
- Flexible deployment: Run in cloud, VPC, or on-prem so data stays where it belongs.
- AI-native primitives: Semantic routing, tool calling, decisioning, and approvals as first-class features.

When Vellum is the best fit

- Cross-functional collaboration: When PMs, SMEs, and engineers need a shared workspace with RBAC, reviews, and approvals to co-build agents.
- Enterprise-grade governance: If your org requires audit logs, HITL, environments, and compliance-ready controls out of the box.
- Fast, safe iteration: When you need to prototype quickly with Agent Builder but still rely on built-in evaluations, versioning, and rollbacks.
- Flexible, secure deployment: If strict data residency or IT policies demand SaaS, VPC, or on-prem options without lock-in.

How Vellum compares (at a glance)

| Comparison | Vellum Advantage |
| --- | --- |
| Vellum vs LangChain | Built-in evaluations, versioning, observability, and enterprise governance out of the box, so teams move from prototype to production safely. |
| Vellum vs Cloud-Native Platforms (Vertex AI Agent Builder, Microsoft Azure Copilot Studio, AWS Bedrock AgentCore) | Cloud-agnostic deployment (SaaS, VPC, on-prem) with evaluations, observability, and governance included, with no single-vendor lock-in. |
| Vellum vs Workflow/Automation Tools (n8n, Zapier, Gumloop, Lindy) | Purpose-built for AI agents with RBAC, audit logs, evaluations, tracing, and rollback: capabilities simple automators and lightweight builders lack. |
| Vellum vs Open-Source & Niche Frameworks (Stack AI, Flowise, Superagent, CrewAI, Dust, Relevance AI, OpenPipe) | Enterprise-ready platform with shared workspaces, CI hooks, governance, and deploy-anywhere options, without stitching together OSS or analytics-first tools. |

Ready to become AI-native on Vellum?

Start free and see how Vellum’s shared workspace, evals, and RBAC let teams co-build agents faster, without extra engineering overhead.

FAQs

1) What is the main limitation of LangChain for enterprise teams?

LangChain is strong for developer prototyping, but light on built-in governance, observability, and deployment flexibility. Vellum ships these out of the box so enterprises can move from pilot to production faster.

2) Do LangChain alternatives make collaboration easier?

Yes, platforms with shared workspaces and approvals help non-devs contribute; Vellum adds RBAC, audit trails, and a visual Agent Builder so PMs/SMEs co-build without blocking engineering.

3) How do LangChain alternatives reduce engineering overhead?

By bundling testing, versioning, tracing, and rollback all in one place. Vellum centralizes these controls so engineers spend less time building scaffolding and more time improving outcomes.

4) Why does deployment flexibility matter when choosing an alternative?

Some industries require strict data residency or on-prem hosting. Platforms that offer SaaS, VPC, and on-prem deployment prevent compliance issues down the line.

5) Can non-technical teams build agents without coding?

Yes, low-code builders help, but quality hinges on guardrails; Vellum’s visual builder includes an Agent Builder that turns prompts into AI agents. It pairs with eval gates and approvals, so non-technical teams can ship safely.

6) How do LangChain alternatives handle security and compliance?

Look for RBAC, audit logs, environment isolation, and approvals as core primitives, not plugins, especially in heavily regulated industries.
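To make these primitives concrete, here is a minimal sketch of a role-based permission check that writes every decision to an audit log. The role names, the permission sets, and the `AuditLog` and `authorize` helpers are hypothetical and exist only for illustration; real platforms expose their own models and APIs for this.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": {"view"},
    "editor": {"view", "edit"},
    "admin": {"view", "edit", "deploy"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; a real system would persist this durably."""
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "allowed": allowed,
        })


def authorize(user: str, role: str, action: str, log: AuditLog) -> bool:
    """Check the action against the role and log the decision either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, action, allowed)
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    print(authorize("ana", "editor", "deploy", log))  # editors cannot deploy
    print(authorize("bo", "admin", "deploy", log))    # admins can
    print(len(log.entries), "audit entries recorded")
```

Logging denied attempts as well as approved ones is the design choice that matters here, since auditors typically ask about both.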

7) Are open-source options better than managed platforms?

Open-source tools offer flexibility and control, but managed platforms often save time with built-in monitoring, governance, and enterprise support.

8) How do evaluation tools in alternatives improve reliability?

Built-in evals allow teams to test agents before rollout, compare changes over time, and prevent regressions from reaching production.
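As a rough illustration of an evaluation gate, the sketch below compares a candidate agent against a baseline on a small test set and promotes the candidate only if it clears a threshold and does not regress. Everything here is an assumption made for the example: agents are modeled as plain functions from prompt to text, the pass check is a simple substring match, and `EvalCase`, `pass_rate`, and `should_promote` are hypothetical names rather than any platform's API.

```python
from dataclasses import dataclass
from typing import Callable, List

Agent = Callable[[str], str]  # assumption: an agent maps a prompt to text


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected_substring: str


def pass_rate(agent: Agent, cases: List[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected substring."""
    if not cases:
        raise ValueError("need at least one eval case")
    passed = sum(
        1 for case in cases
        if case.expected_substring.lower() in agent(case.prompt).lower()
    )
    return passed / len(cases)


def should_promote(candidate: Agent, baseline: Agent,
                   cases: List[EvalCase], min_rate: float = 0.9) -> bool:
    """Promote only if the candidate meets the bar and does not regress."""
    candidate_rate = pass_rate(candidate, cases)
    baseline_rate = pass_rate(baseline, cases)
    return candidate_rate >= min_rate and candidate_rate >= baseline_rate


if __name__ == "__main__":
    cases = [
        EvalCase("What is the refund window?", "30 days"),
        EvalCase("Which plan includes SSO?", "enterprise"),
    ]
    baseline = lambda prompt: "Refunds are accepted within 30 days."
    candidate = lambda prompt: (
        "Refunds are accepted within 30 days. SSO is on the Enterprise plan."
    )
    print("promote candidate:", should_promote(candidate, baseline, cases))
```

In practice the substring check would be replaced by whatever metric fits the task, but the gate logic (threshold plus no regression against the current version) stays the same.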

9) What role does observability play in scaling agents?

Logs, traces, and cost tracking help teams quickly debug issues and manage performance, which becomes critical as usage grows.

10) When is Vellum the right choice among LangChain alternatives?

Choose Vellum when you need developer-grade SDK control plus cross-team collaboration, with evaluations, observability, governance, and deploy-anywhere options built in.

Extra Resources

- How the best teams ship AI solutions
- 2026 Guide to AI Agent Workflows
- Ultimate LLM Agent Build Guide
- Understanding agentic behavior in production
- The Best AI Agent Frameworks For Developers

Citations

[1] Google Cloud. (2025). Agent2Agent protocol is getting an upgrade.

[2] KPMG. (2025). Ten Key Regulatory Challenges: 2025 Mid-Year.

[3] Forrester. (2025). The State Of Low-Code, Global 2025.

[4] OpenLogic. (2025). 2025 State of Open Source Report.

[5] Productive/edge. (2025). Gartner’s Top 10 Tech Trends Of 2025: Agentic AI and Beyond.