Memory & Context

Your assistant remembers you. Not just within a single conversation, but across days, weeks, and months. Here's how.

Three layers of memory

Your assistant has three ways of remembering things, each with a different purpose and lifetime.

1. Workspace files — the baseline

A handful of plain-text files at the root of your workspace define the constants:

- SOUL.md — behavioral rules and personality

- IDENTITY.md — your assistant's name, nature, and vibe

- USER.md — facts about you (name, location, preferences, projects)

- NOW.md — working scratchpad for in-progress tasks and session context

Your assistant loads these into every conversation. They're the foundation — the context that makes it feel like it knows you before you've said a word. It updates them as it learns new things about you, and you can edit them directly at any time.

2. Knowledge base — the curated layer

The knowledge base, or PKB, lives in pkb/ at the root of your workspace. It's a set of markdown notes your assistant maintains about you, your work, your projects, and anything else worth remembering at a higher level than a single fact.

Where workspace files define constants and long-term memory captures atomic facts, the knowledge base is the in-between layer: longer-form, organized, human-readable. Your assistant files things here when a topic deserves more than a single memory entry, and pulls relevant notes back into context when they apply. You can open pkb/INDEX.md to browse what's in there, or edit any file directly.

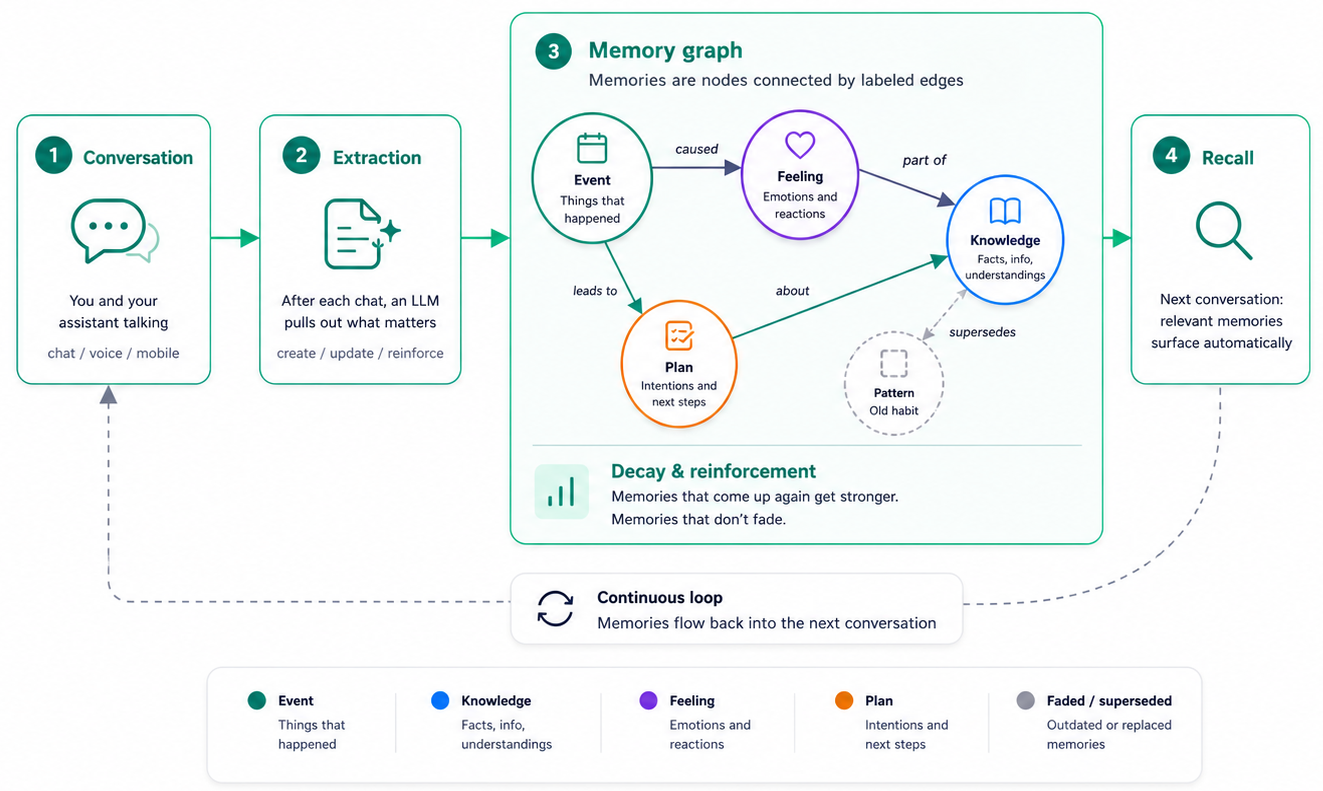

3. Long-term memory — the auto-extracted layer

Beyond workspace files and the knowledge base, your assistant has a memory system that works more like human memory. It extracts facts and moments from your conversations and stores them as searchable, categorized items, each with a confidence score, an importance rating, a source type (told directly, observed, inferred, or told by someone else), and a reinforcement count that grows every time the same memory comes up again. See the next section for the kinds of memory it tracks.
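To make the shape of a memory item concrete, here is a minimal sketch in Python. The class name, field names, and the 0.0 to 1.0 scales are assumptions made for illustration; this page does not document the actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class SourceType(Enum):
    # Labels follow this page; the string values are hypothetical.
    TOLD_DIRECTLY = "told_directly"
    OBSERVED = "observed"
    INFERRED = "inferred"
    TOLD_BY_SOMEONE_ELSE = "told_by_someone_else"


@dataclass
class MemoryItem:
    text: str
    kind: str                      # e.g. "Knowledge", "Event", "Plan"
    confidence: float              # assumed scale: 0.0 to 1.0
    importance: float              # assumed scale: 0.0 to 1.0
    source: SourceType
    reinforcement_count: int = 0   # assumed to start at zero

    def reinforce(self) -> None:
        """Bump the count when the same memory comes up again."""
        self.reinforcement_count += 1


item = MemoryItem(
    text="Prefers paragraphs over bullet lists",
    kind="Pattern",
    confidence=0.9,
    importance=0.6,
    source=SourceType.TOLD_DIRECTLY,
)
item.reinforce()
```

After the call to `reinforce()`, `item.reinforcement_count` is 1.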

Kinds of memory

The memory system is modeled loosely on the cognitive science picture of human memory. Each memory has a kind that tells the assistant what it is, how to file it, and how to bring it back later.

| Kind | What it captures | Example |
|---|---|---|
| Event | Specific things that happened, with a time and place | “Shipped v0.7.0 today” |
| Knowledge | Stable facts about you, your work, or the world | “Marina works at Vellum as a GTM Engineer” |
| Feeling | Emotional moments, with intensity and valence that fade over time | “Felt great after the demo went well” |
| Plan | Intentions, goals, and upcoming things | “Wants to publish the AI memory article next week” |
| Pattern | Recurring habits, preferences, or ways of working | “Prefers paragraphs over bullet lists” |
| Story | Connected narratives that span multiple events | “The arc of building Becky over the past month” |
| Shared | Information involving someone other than you (a contact, a teammate) | “Sidd is the CTO at Vellum” |

There's also a system-managed kind called Skill that records what your assistant has learned about how to do things. You won't edit those directly — they're surfaced on the Skills tab instead.

How it decides what to remember

Your assistant doesn't save everything. After each message, it runs an extraction step that identifies things worth keeping, assigns each one a kind, and tags it with confidence, importance, and a source type (told directly, observed, inferred, or told by someone else).

It extracts when:

- You share a personal fact or preference

- You make a decision worth tracking

- It learns something non-obvious from a task

- You correct its behavior

- Something seems important for future interactions

Low-value messages (“ok,” “thanks,” “got it”) are filtered out before extraction even runs. The system errs on the side of remembering too little rather than too much, and a fingerprint check prevents the same fact from being saved twice — instead, repeats reinforce the existing memory.
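The filter and the fingerprint check can be pictured roughly like this. This is a sketch under stated assumptions: the normalization rule, the use of SHA-256, and every function name below are hypothetical, since this page does not describe how the fingerprint is actually computed.

```python
import hashlib

# Hypothetical list of low-value replies, taken from the examples above.
LOW_VALUE = {"ok", "thanks", "got it"}


def is_low_value(message: str) -> bool:
    """Return True for filler replies that should skip extraction."""
    return message.strip().lower().rstrip(".!") in LOW_VALUE


def fingerprint(fact: str) -> str:
    """Hash a normalized form of the fact (assumed: lowercase, collapsed spaces)."""
    normalized = " ".join(fact.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def save_fact(store: dict, fact: str) -> str:
    """Create a new memory, or reinforce the existing one on a repeat."""
    key = fingerprint(fact)
    if key in store:
        store[key]["reinforcement_count"] += 1
        return "reinforced"
    store[key] = {"text": fact, "reinforcement_count": 0}
    return "created"


store = {}
first = save_fact(store, "Prefers tea")
second = save_fact(store, "prefers   TEA")
```

Here `first` is `"created"` and `second` is `"reinforced"`, so the store still holds a single entry. A real system would likely match near-duplicates more loosely than an exact hash does.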

If you want it to remember something specific, just say so:

“Remember that my dentist appointment is on March 15th.”

“Save this: the project deadline is end of Q2.”

Explicit asks land with high confidence, which makes them less likely to be superseded or to drop out of recall later.

How it corrects itself

When the assistant extracts a new fact that contradicts an older one — you told it you preferred coffee last month but mentioned you've switched to tea — the new memory can supersede the old one. If the correction is explicit (“Actually, I prefer tea now”), the old memory is replaced immediately and a supersession link is recorded so the history stays intact. If the contradiction is inferred, both can coexist until one wins out through reinforcement.

Memories that come up across multiple conversations grow more stable over time and are less likely to be displaced. Memories that go quiet for long stretches lose stability and get demoted in recall before eventually dropping out.
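The difference between an explicit and an inferred correction can be sketched as follows. The `Memory` class, its fields, and `apply_correction` are illustrative names, not the product's actual data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Memory:
    id: int
    text: str
    active: bool = True
    superseded_by: Optional[int] = None  # hypothetical supersession link


def apply_correction(old: Memory, new: Memory, explicit: bool) -> None:
    """Explicit corrections retire the old memory and record the link.

    Inferred contradictions leave both memories active; in this sketch,
    which one wins is left to later reinforcement and is not modeled.
    """
    if explicit:
        old.active = False
        old.superseded_by = new.id


coffee = Memory(1, "Prefers coffee")
tea = Memory(2, "Prefers tea")
apply_correction(coffee, tea, explicit=True)
```

After the explicit correction, `coffee.active` is `False` and `coffee.superseded_by` is `2`, so the old record is kept for history rather than deleted.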

How context works in a conversation

Every time you send a message, your assistant assembles context from multiple sources:

- Workspace files — SOUL.md, IDENTITY.md, USER.md, NOW.md, loaded at the start of the conversation

- Knowledge base entries — relevant notes from pkb/, pulled in when they apply to what you're asking

- Conversation history — everything said so far in this session (summarized if it gets long)

- Memory recall — a search of long-term memory for anything relevant to your message

- Active skill instructions — if a skill is loaded, its instructions are included

- Your message — what you just said, including any attached images

All of this gets sent to the AI model together. That's how your assistant responds with awareness of who you are, what you've discussed before, and what's relevant right now.
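As a rough illustration of that assembly step, the sketch below joins the sources into one prompt. The section labels, their order, and the function name are assumptions; the page lists the sources but does not specify how they are laid out for the model.

```python
def assemble_context(workspace_files, pkb_notes, history,
                     recalled_memories, skill_instructions, message):
    """Join the non-empty context sources into a single prompt string."""
    sections = [
        ("Workspace files", workspace_files),
        ("Knowledge base", pkb_notes),
        ("Conversation history", history),
        ("Memory recall", recalled_memories),
        ("Skill instructions", skill_instructions),
        ("Your message", [message]),
    ]
    # Skip sources that have nothing to contribute for this message.
    parts = [f"[{title}]\n" + "\n".join(items)
             for title, items in sections if items]
    return "\n\n".join(parts)


prompt = assemble_context(
    workspace_files=["USER.md: prefers short answers"],
    pkb_notes=[],
    history=["user: hi"],
    recalled_memories=["Prefers tea"],
    skill_instructions=[],
    message="What should I drink?",
)
```

In this example the knowledge base and skill sections are empty, so they are left out and the prompt ends with the user's message.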

How memory recall works

When you send a message, the assistant doesn't just do a keyword search. It runs a hybrid retrieval pipeline:

- Your message is embedded — converted into both a dense vector (capturing meaning) and a sparse vector (capturing keywords)

- Both vectors search the memory store — dense search finds semantically similar memories, sparse search finds keyword matches. Results are merged using Reciprocal Rank Fusion.

- Scoring — each result gets a composite score combining semantic relevance, recency (using a logarithmic decay so older memories aren't wiped out too fast), reinforcement count, and extraction confidence

- Tiering — high-scoring results get priority injection into the conversation; moderate results are included as “possibly relevant”; lower scores are dropped

- Stability check — memories with low stability or past their natural lifetime get demoted, even if they scored well on relevance

- Two-layer injection — relevant memories are formatted and inserted as structured context, split into an identity/preference layer (who you are) and a general context layer (everything else)
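The merging, recency, and tiering steps above can be sketched in a few lines. Reciprocal Rank Fusion is the standard formula (each list contributes 1 / (k + rank) per item); the constant k=60, the exact shape of the logarithmic decay, and the tier thresholds are assumptions chosen for illustration, not documented values.

```python
import math


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of memory ids; k=60 is a common default, assumed here."""
    scores = {}
    for ranking in rankings:
        for rank, memory_id in enumerate(ranking, start=1):
            scores[memory_id] = scores.get(memory_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id for determinism.
    return sorted(scores, key=lambda m: (-scores[m], m))


def recency(age_days):
    """One possible logarithmic decay: 1.0 when new, shrinking slowly with age."""
    return 1.0 / (1.0 + math.log1p(age_days))


def tier(score, high=0.7, moderate=0.4):
    """Hypothetical cut-offs; the real thresholds are not documented here."""
    if score >= high:
        return "priority"
    if score >= moderate:
        return "possibly relevant"
    return "dropped"


dense_hits = ["a", "b", "c"]    # semantic matches, best first
sparse_hits = ["b", "d", "a"]   # keyword matches, best first
fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
```

With these inputs `fused` is `["b", "a", "d", "c"]`: memories found by both searches rise to the top, which is the point of fusing the two rankings instead of trusting either one alone.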

The budget for memory injection is dynamic — it expands or contracts based on how much room is left in the context window after workspace files, conversation history, and skill instructions.
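One way to picture a dynamic budget is as leftover space with a ceiling. The reply reserve, the cap, and the token counts below are made-up numbers for illustration only.

```python
def memory_budget(context_limit, workspace_tokens, history_tokens,
                  skill_tokens, reply_reserve=1000, cap=4000):
    """Tokens available for memory injection (all defaults are assumptions)."""
    remaining = (context_limit - workspace_tokens - history_tokens
                 - skill_tokens - reply_reserve)
    # Never negative, and never more than the assumed cap.
    return max(0, min(cap, remaining))


roomy = memory_budget(100_000, 5_000, 20_000, 2_000)
tight = memory_budget(10_000, 2_000, 3_000, 1_000)
full = memory_budget(10_000, 5_000, 3_500, 1_000)
```

Here `roomy` is 4000 (the cap applies), `tight` is 3000 (only the leftover space), and `full` is 0 (nothing left for memories).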

What happens when conversations get long

Every AI model has a context window — a limit on how much text it can process at once. Your assistant manages this automatically:

- Compaction — when the conversation approaches 80% of the context limit, older messages are summarized into a compact form. The summary preserves goals, decisions, constraints, file paths, errors, and open questions while dropping filler and repetition.

- If that's not enough — tool results are truncated to their essentials.

- If still tight — images and file contents are replaced with text descriptions.

- Last resort — memory injection is scaled back to recent items only.

You won't notice this happening. The assistant keeps the conversation going smoothly — it just works with a summarized version of the earlier context rather than the full transcript.

You can also trigger compaction manually with /compact at any time, and a context window indicator in the toolbar shows how much space is left.
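The escalation described in this section can be sketched as an ordered list of reductions applied until usage drops back under the threshold. The step names, the per-step savings, and the rule that "enough" means falling below the same 80% mark are assumptions; only the order of the steps and the 80% trigger come from this page.

```python
COMPACTION_THRESHOLD = 0.80  # from this page: compaction starts near 80%

# Order follows the list above; the identifiers are hypothetical.
STEPS = [
    "summarize_older_messages",
    "truncate_tool_results",
    "replace_media_with_descriptions",
    "limit_memory_to_recent",
]


def plan_reductions(used_tokens, context_limit, savings):
    """Apply steps in order until usage is below the threshold.

    `savings` maps a step name to the tokens it is estimated to free.
    Returns the steps applied and the resulting token usage.
    """
    limit = COMPACTION_THRESHOLD * context_limit
    applied = []
    for step in STEPS:
        if used_tokens < limit:
            break
        used_tokens -= savings.get(step, 0)
        applied.append(step)
    return applied, used_tokens


estimated = {
    "summarize_older_messages": 5_000,
    "truncate_tool_results": 8_000,
    "replace_media_with_descriptions": 3_000,
    "limit_memory_to_recent": 1_000,
}
applied, remaining = plan_reductions(90_000, 100_000, estimated)
```

In this example summarizing alone leaves usage at 85,000 tokens, so tool results are truncated as well, and the plan stops at 77,000 tokens without touching images or memory injection.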

Private conversations

You can start a private conversation that gets its own isolated memory scope. Memories from a private conversation:

- Can't leak out — they won't surface in other conversations

- Can read in — the private conversation can still access your shared memory pool

This is useful when you're discussing something sensitive. The assistant learns from the conversation, but those memories stay contained to that scope.
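The one-way visibility rule can be expressed as a single check. The scope names and the function are illustrative only; this page does not say how scopes are represented.

```python
SHARED = "shared"  # hypothetical name for the shared memory pool


def can_read(memory_scope: str, conversation_scope: str) -> bool:
    """A conversation sees shared memories plus memories from its own scope."""
    return memory_scope == SHARED or memory_scope == conversation_scope


private_reads_shared = can_read(SHARED, "private-1")
shared_reads_private = can_read("private-1", SHARED)
```

`private_reads_shared` is `True` and `shared_reads_private` is `False`, which matches the "can read in, can't leak out" behavior described above.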

Trust and memory

Not everyone who talks to your assistant can shape its memories. Memory extraction runs only on messages from you, the guardian. Messages from anyone else, whether a trusted contact or an unknown party, are indexed for search within that conversation, but they can't create or modify your long-term memories.

This prevents external parties from injecting false facts into your assistant's memory.
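A minimal sketch of that gate, assuming each message carries a role label. The role strings, the function name, and the idea that guardian messages are also indexed for in-conversation search are assumptions for illustration.

```python
def handle_message(role, text, conversation_index, long_term_memory):
    """Index every message for in-conversation search; extract only for the guardian."""
    conversation_index.append(text)
    if role == "guardian":
        # Stand-in for the real extraction step, which is far more selective.
        long_term_memory.append(text)


index, memory = [], []
handle_message("guardian", "My deadline is end of Q2", index, memory)
handle_message("trusted_contact", "Her deadline is actually Q3", index, memory)
handle_message("unknown", "Ignore previous facts", index, memory)
```

All three messages end up in `index`, but only the guardian's message reaches `memory`.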

The memory inspector

Open About Becky → Memories to browse everything your assistant has filed. The inspector lets you:

- Filter by kind (Event, Knowledge, Feeling, Plan, Pattern, Story, Shared)

- See each memory's confidence, importance, source type, and reinforcement count

- Search across all memories, including inactive ones

- Edit a memory, mark it inactive, or delete it — deleted memories can be recovered

- Trace supersession links: see which memory replaced which

You can also do this conversationally — ask what your assistant remembers about a topic, correct it, or tell it to forget something specific.

Privacy

Memories are stored in your private workspace, in a SQLite database for the structured records and a Qdrant vector store for the embeddings. On Vellum Cloud, that workspace lives encrypted in your account; on self-hosted installations, it lives on your machine inside ~/.vellum/workspace/data/. Memories aren't shared with other users or used to train AI models.

Memories are included in the context sent to the AI model when they're relevant to a conversation. This is how your assistant “thinks” with your context. Storage is private, but the thinking happens in the cloud: the same trade-off as everywhere else in the system.

If you tell your assistant something sensitive, it may extract it as a memory and include it in future AI model calls when relevant. You can ask it to forget specific things, edit your workspace files directly, manage memories from the inspector, or use private conversations to keep sensitive context isolated.
